The Intelligence Misnomer: Notes on the Future of Higher Education from Mohali, India

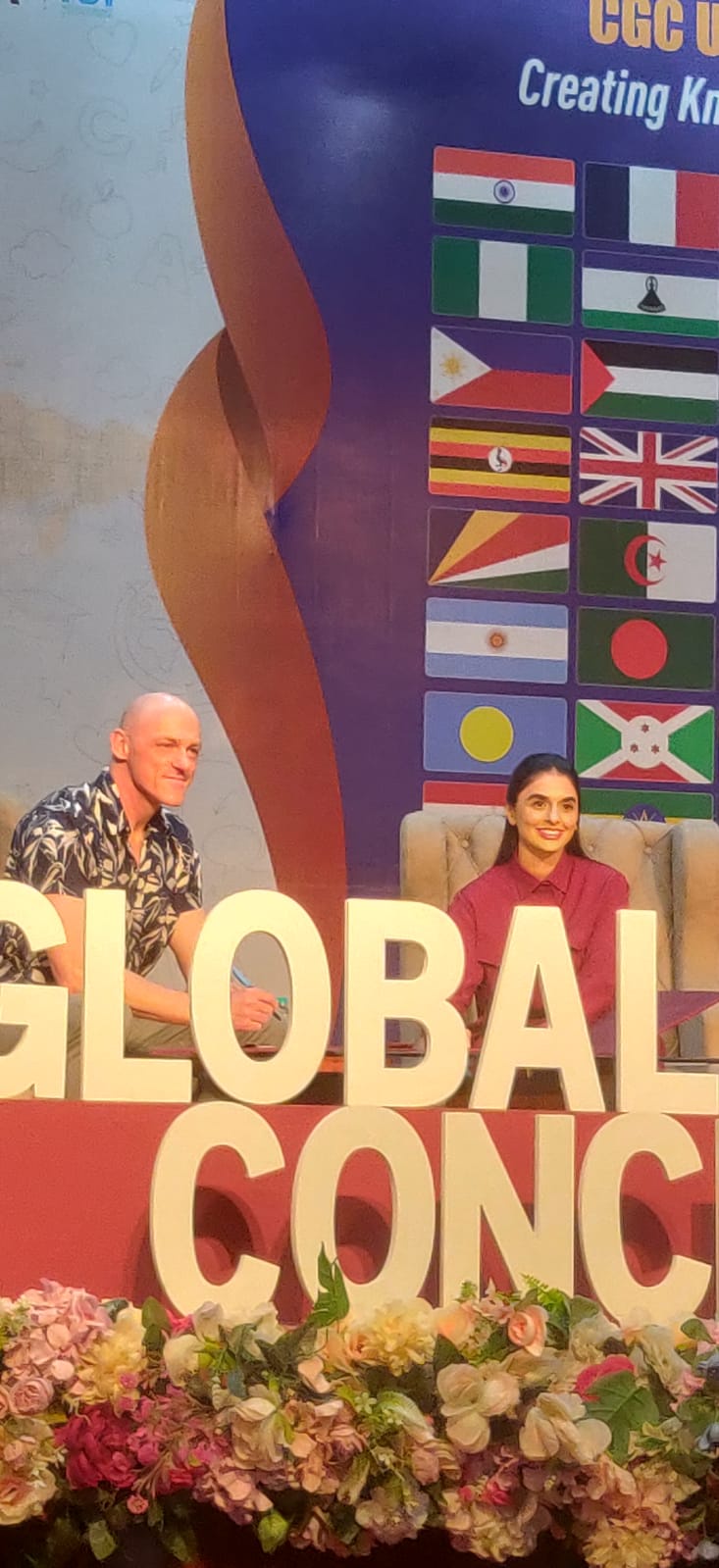

I was invited to speak at the Global Education Conclave 2026, hosted by CGC University in Mohali, which gathered more than 120 delegates from over 60 nations under the theme “EduVerse 2050: Rethinking Global Academia for a New Human Epoch.”

This is a written version of my main talking points, edited after the conference. It combines the narrative of my talk with reflections on the actual events and meetings of those intense days in Mohali, India.

Quick Overview

- Unique delegate structure – diplomats, scholars, and activists.

- Geopolitical diversity – only a handful of participants came from the West, which brought in varied perspectives from across the globe.

- Peace‑building through education – amid rising populism, the conclave panels underscored education’s role as a bridge between conflicted societies.

- Exploitation in the AI pipeline – annotation work for training Large Language Models (LLMs) is often outsourced to workers in the Global South who reap none of the downstream benefits.

- Call for local models and data – the argument that culturally relevant, locally trained models are essential to avoid the “US‑centric” bias of current LLM services.

- “AI” is not the same as proprietary LLM services.

These threads weave together a coherent narrative: the future of higher education cannot be outsourced to opaque, profit‑driven, monocultural LLM-based platforms. It must remain a public good, rooted in critical thinking, cultural pluralism, and open scholarship free from commercial gatekeepers.

The conclave was unusual in the best possible way: diplomats sat alongside scholars, each bringing different perspectives on peace-building. It was striking to hear voices from outside the US and Western Europe outnumber the traditional Western perspectives by a wide margin. That composition mattered. It shaped what got said – and what I learned.

LLM ≠ AI

My background is in AI and information technology. I have a Master's in Cognitive Science and a PhD in Computational Linguistics with a focus on interactive AI. I have spent 25 years putting AI technologies into use, both as a practitioner and as a researcher. You might expect me to be an enthusiastic advocate for initiatives like Gemini for Students or ChatGPT Education. I am not, and I want to explain why – carefully, because the argument matters.

My point was not that everything under the “AI” umbrella is bad. AI as a field is far larger than LLMs and has been developing for at least 70 years with a multitude of approaches.

Instead, I wanted to point out something more uncomfortable: that the products currently being sold to our higher education institutions under the name “AI” are being systematically misdescribed, that the people selling them know this, and that students are ultimately the ones who will pay the price.

What the “AI Product” Actually Is (Currently)

The problem begins with the word “intelligence.” When a company calls a product “artificial intelligence”, we fill in the gap with a meaning we already understand. Intelligence: the capacity to reason, to understand, to form genuinely new ideas. That is what the word means to us. It is not what it means in the products currently being labeled AI. This is not a subtle distinction. It is a central misconception – and in the context of institutional adoption, it is closer to actual deception.

Technically, LLM systems are large statistical models trained on enormous quantities of human-produced text: text written by humans, for humans to read. An LLM learns the probability distributions of word (token) sequences. When given a prompt, it samples from those distributions to produce a plausible-looking continuation. That is the mechanism. Entirely. There is no reasoning. There is no understanding. It is pattern completion at massive scale.

The word “generative” has the same problem. In plain language it sounds like creativity, like something new being made. In the actual mathematical sense, generative only means the model approximates a distribution and samples from it. It cannot reach outside what it has seen. It interpolates and recombines within learned boundaries, and it does that with impressive fluency. But fluency is not understanding. When a model produces a coherent-looking summary of a historical argument, it has not understood the argument. It has produced a statistically plausible reconstruction of what a summary of that kind of argument tends to look like. It cannot tell you what the argument gets wrong. It does not know when it is outside its competence – which is why it fabricates citations and hallucinates facts with complete confidence.
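To make the mechanism concrete, here is a deliberately tiny sketch in Python. It is a toy word-level bigram model, not how production LLMs are built: real systems use neural networks over subword tokens and condition on far longer contexts. The function names `train_bigram`, `next_token_distribution`, and `generate`, and the `<s>`/`</s>` sentence markers, are assumptions chosen for illustration. What the toy shares with the real thing is the basic loop described above: estimate a probability distribution over the next token, sample from it, repeat.

```python
import random
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word follows each other word in the corpus."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        # <s> and </s> are assumed start/end markers for a sentence.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def next_token_distribution(counts: dict[str, Counter], prev: str) -> dict[str, float]:
    """Normalize the counts into a probability distribution P(next | prev)."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}


def generate(counts: dict[str, Counter], rng: random.Random, max_len: int = 20) -> str:
    """Sample a continuation one token at a time until the end marker."""
    prev, out = "<s>", []
    for _ in range(max_len):
        dist = next_token_distribution(counts, prev)
        tokens, weights = zip(*dist.items())
        # Weighted random choice: likelier continuations are picked more often.
        prev = rng.choices(tokens, weights=weights, k=1)[0]
        if prev == "</s>":
            break
        out.append(prev)
    return " ".join(out)


if __name__ == "__main__":
    corpus = [
        "the model predicts the next word",
        "the student reads the text",
        "the student writes the essay",
    ]
    counts = train_bigram(corpus)
    print(next_token_distribution(counts, "the"))
    rng = random.Random(42)
    for _ in range(3):
        print(generate(counts, rng))
```

Trained on these three sentences, the toy can produce a "new" sentence such as "the student reads the essay", which appears nowhere in its corpus. Yet every word and every word-to-word transition in it was in the training data: recombination within learned boundaries, not invention.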

The people building these systems know this.

The people selling them to our institutions and universities also know this.

The framing of “AI” as intelligence, as reasoning, as a thinking partner, is a marketing decision. And that marketing decision is now shaping academic policy at institutions that are supposed to be built on precision, source criticism, and rigorous thought.

Who Is Actually Selling It

When the conversation turns to “AI in education,” it is framed as if we were discussing a broad and open category of tools. We are not. In practice, we are talking about a handful of commercial services from OpenAI, Anthropic, Google, and Microsoft. These are not education companies. They are among the largest commercial platform companies in history, headquartered in the United States, operating under US legal frameworks (the CLOUD Act, for example), with business models built on lock-in, data accumulation, and scale. When a university integrates one of these services into its learning management system, it hands a portion of the university's knowledge infrastructure to a commercial actor whose systems cannot be audited, whose behavior cannot be reliably predicted, and whose terms of service reserve the right to analyze behavioral metadata regardless of what the headline privacy promises say.

The Monoculture Problem

There is a structural problem here. These models are optimized for English and an American textual culture. When millions of students at thousands of institutions worldwide are using the same two or three closed models to research, summarize, and draft, the result is a global homogenization of what knowledge looks like – and that homogenization flows outward from a single cultural center. This point landed hard in the conclave’s multicultural context, and rightly so.

The conclave's composition – delegates from across Africa, Asia, the Middle East, and Latin America – foregrounded what is usually politely left aside in Western discussions of EdTech adoption: these tools were not built for most of the world's students, do not reflect most of the world's intellectual traditions, and the people doing the low-wage annotation work that makes them function are typically from the Global South and benefit from them the least.

I sat next to two esteemed fellow panelists from Ethiopia and Nigeria, and one of the most incisive points raised in our panel was the urgent need for local models, local data infrastructure, and local governance. The reason is simple: contemporary models carry very little meaningful context for the majority of their global users. This is a structural failure.

Education as Resistance

The researchers and educators who used to determine what counts as rigorous analysis are being gradually displaced by the probability weights of commercial systems optimized for plausibility, owned by companies optimized for growth.

Universities stand for open science, source criticism, and reproducibility. We risk building pedagogy on closed, non-replicable statistical systems that we cannot scrutinize and did not choose on educational grounds. The pressure to adopt these tools combines three forces: fear of being seen as behind, funding tied to adoption, and the absence of organized faculty resistance at the moment decisions are made.

None of those forces is an educational reason. And this is happening at a moment when higher education is already under attack from populist movements that question its value, its legitimacy, and its purpose. The Palestinian ambassador's framing – “education as resistance” – was not just a slogan. In a room representing 60 nations, many of them navigating serious political pressure, it summarizes what is at stake. Surrendering the epistemic foundations of universities to unauditable commercial systems is not a neutral administrative choice. It is a capitulation at exactly the wrong time.

What We Can Do

Three positions:

First, demand real technical literacy before adoption. Before your institution deploys any of these tools in a learning context, someone with genuine technical knowledge – not a vendor representative – should be able to answer in plain language: what does this system actually do? What are its known failure modes? What data does it collect, and what do the actual terms of service say? If those questions cannot be answered clearly, adoption should wait.

Second, protect the process. Design assessment for process visibility. Oral examinations. Iterative drafts with documented revision. In-person discussion of written work. Assignments that require engagement with specific sources a model cannot have accessed. These are pro-learning positions, and we know they produce the outcomes education exists to produce.

In the panel, I put it this way: “You do not send a robot to the gym to do the lifting for you. The friction and struggle are the point. An LLM service, used without reflection, is the direct opposite of that. It removes the resistance that builds intellectual capacity – and it makes students and scholars dependent in the process. Reading deeply and discussing even more deeply is what matters. That has not changed.”

Third, say out loud what you actually think. There is enormous pressure in academic institutions to perform enthusiasm for these tools, or at minimum to avoid being publicly critical. Push back on that pressure. When adoption decisions are being made in your departments, show up and say clearly what the evidence says and what your professional judgment is.

The companies selling these products are extremely loud. Educators and guardians of knowledge and critical thinking need to be louder.

In Closing

We are being pushed toward a version of higher education where knowledge is a product to be delivered, learning is a transaction to be optimized, and the university's role is to credential people who have learned how to prompt proprietary AI services. That is not higher education.

What happens next will not be determined by what OpenAI or Google builds. It will be determined by what you decide to defend – in your classrooms, your departments, your institutions.

Several delegates cited Nelson Mandela’s point that education is the most powerful weapon for changing the world. He was right. But such weapons require the person holding them to have judgment, skill, and the strength built from genuine effort. That strength does not come from outsourcing your thinking to machines. It comes from doing the intellectual work yourself.

The wisdom is already in our culture, for instance in this passage from Frank Herbert's novel Dune, published in 1965(!):

"Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them."

The Global Education Conclave 2026 was held at CGC University, Mohali, India. My specific panel addressed the intersection of AI technology, pedagogical integrity, and global educational sovereignty.

Thank you to the wonderful organizers and CGC University Mohali for creating this international platform for conversation.